What do hundreds of thousands of dollars and four years of full-time programming in the computer science program at a prestigious Research I institution get you?

A lot of things — except a portfolio.

It’s no secret that there’s a serious shortage of computing professionals at the moment. You’re probably used to hearing that yet another friend has decided to double major or minor in computer science.

Your friend might be disappointed to realize, however, that not only will they learn outdated technologies, they won’t even be able to include class projects in their portfolio due to academic honesty policies.

Then what’s the alternative? Well, there are coding boot camps, which provide expedited programming courses that cost a fraction of the time and money that universities do — one quarter for $11,000 on average. They teach technologies that graduates will use in the industry with project-driven curricula that mirror apprenticeships.

But boot camps aren’t a perfect solution. They suffer from a volatile market, inconsistent quality control, and weak grounding in fundamentals such as data structures and algorithms. Employers still prefer college graduates over coding boot camp students for many positions.

So how do undergraduate CS programs fare in comparison? Even though the majority of CS graduates claimed to have learned soft skills, they weren’t able to give specific examples, unlike boot camp grads. Not only that, but a meager fifth of the CS grads worked on collaborative industry projects, compared with three quarters of boot camp students.

Camp or college: neither option is ideal. Your choice is to either pay the college tuition premium for a traditional yet impractical curriculum, or put your trust in coding boot camp companies that promise quick results but are also quick to go bankrupt.

What we need is the best of both worlds: a college CS curriculum that incorporates the tactics of coding boot camps.

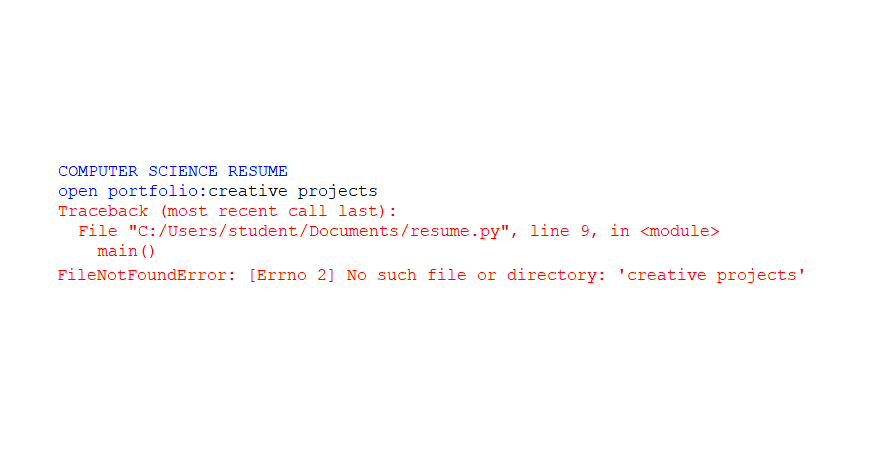

More courses should revolve around creative projects, instead of exams or run-of-the-mill assignments where every student writes the same code. This simple change lets students put their school projects in their portfolios without violating academic honesty policies.

And decades-old technologies need to go. No programmer in 2020 should be learning desktop Java graphics or the quirks of C99 (which I like to call C circa 1999). I understand the desire to teach students how things “really” work under the hood, the gory details of the flesh and bones of a technology. However, the whole point of computer science is to hide away unnecessary details through automation.

We can and should teach the same core principles using newer and simpler tools. MIT and Princeton teach Python, the simplest mainstream programming language out there, and we know those universities wouldn’t skimp on computer science fundamentals.

The lone programmer stereotype is a myth. Cooperation is a necessity for modern computing jobs, and classes should be tailored to that reality. Classes should emphasize collaborative software development practices from day one, like popular project management strategies, shared code with version control, and shared documentation.

CS curricula could even culminate in a more practical, boot camp-style course instead of a traditional research-oriented senior project. There are already boot camps that help CS graduates prepare for the industry. Why can’t it be done in universities?

The answer: It can.

Lecturers at Brandeis University taught a boot camp-style intensive summer program on web and mobile software development that didn’t skimp on theory or practice. It included a collaborative startup-style product launch, taught relevant web and mobile technologies, and introduced students to Agile project management and test-driven development, both widely used by startups. Students also used the industry-standard Git for collaborative version control while freely using open-source projects, as companies routinely do.

It echoes all of my suggestions above, and it works; students remarked that the course was “transformative,” and they engaged in noticeably more entrepreneurial software development after completing the course.

Such a course takes more time investment from faculty to design and deliver than rehashed traditional lectures. But to settle for a mediocre curriculum isn’t the spirit of a University devoted to doing “ever better.”

Only when University curricula adapt to the demands of the real world will we have computing education that is quality-controlled, relevant, and able to stand the test of time.